It’s been some time since I produced an HttpClient-related blog post. This one has been on my list to complete for quite a while. I want to cover an important change that arrived in .NET Core 2.1 with regard to lifetime management of HTTP connections.

TL;DR;

HttpClient in .NET Core (since 2.1) performs connection pooling and lifetime management of those connections. This supports the use of a single HttpClient instance, which reduces the chances of socket exhaustion. When a connection lifetime is configured, pooled connections are also re-established periodically so that DNS changes are reflected.

Recapping the History of HttpClient

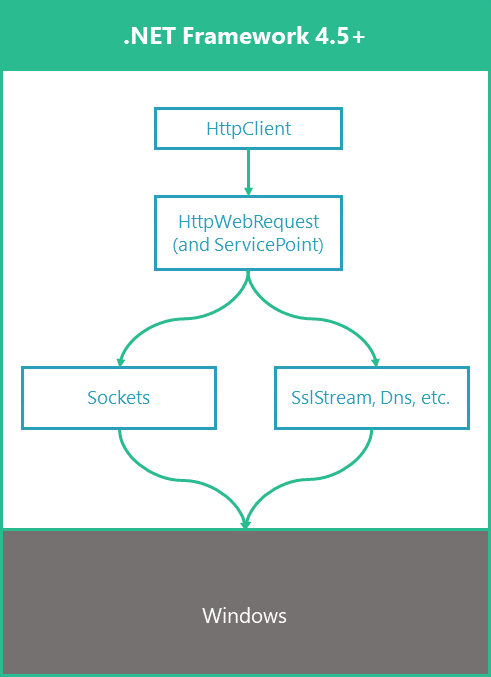

HttpClient initially started its life as a NuGet package which could optionally be included in .NET Framework 4.0 projects. In .NET Framework 4.5 it was provided in the box as part of the BCL (Base Class Library). This was built on top of the pre-existing HttpWebRequest implementation. In .NET Framework, the ServicePoint APIs could be used to control and manage HTTP connections, including setting a connection lifetime by configuring the ConnectionLeaseTimeout for an endpoint.

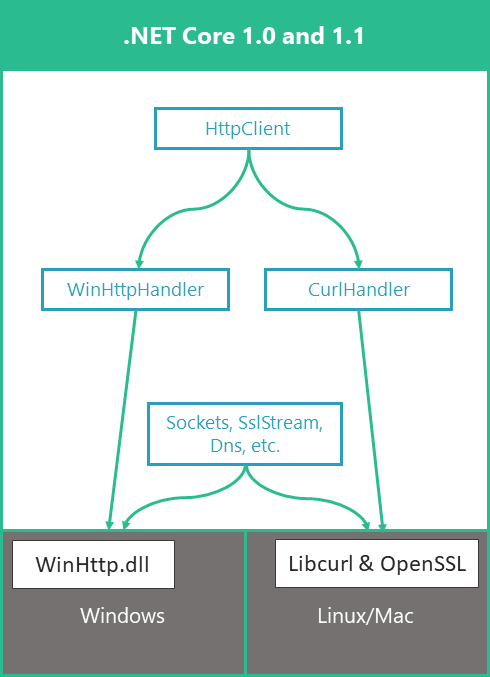

Rolling forward, .NET Core 1.0 was initially released in June 2016. This first version was limited to a much smaller API surface than was available in .NET Framework, mostly focused on building ASP.NET Core web applications. An API which has been available since .NET Core 1.0 is HttpClient. However, APIs for HttpWebRequest and ServicePoint were not included. HttpClient in .NET Core 1.0 was built directly on top of OS platform APIs which use unmanaged code: WinHTTP for Windows and LibCurl for Linux and Mac.

By August 2016, it had been noted that the recommendation to re-use HttpClient instances to prevent socket exhaustion had one quite troublesome side-effect. Oren Novotny opened what became a very long-running GitHub issue entitled “Singleton HttpClient doesn’t respect DNS changes”. In this issue, it was acknowledged that re-using a single HttpClient instance would result in connections remaining open indefinitely and, as a result, DNS changes may cause either request failures or communication with outdated endpoints.

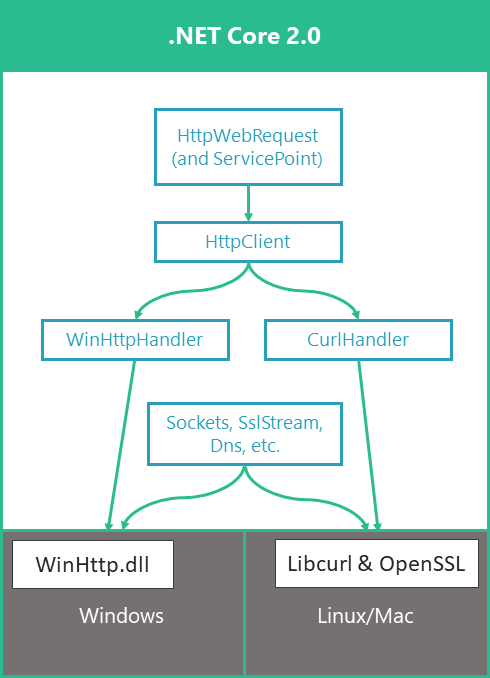

In .NET Core 2.0, HttpWebRequest was added back in to support .NET Standard 2.0. This sits on top of the HttpClient implementation, the reverse of how this works in .NET Framework 4.5+. ServicePoint was also added, although much of its API surface is either not implemented (throwing a PlatformNotSupportedException) or present but has no effect.

Changes Since .NET Core 2.1

This problematic behaviour led to two pieces of work from two different teams. The ASP.NET team started working on the Microsoft.Extensions.Http package, of which the primary feature is IHttpClientFactory. This opinionated factory for HttpClient instances also includes lifetime management of the underlying HttpMessageHandler chains. If you want to read more about this feature, you can review my series of blog posts, where I cover this extensively.

The IHttpClientFactory feature was released as part of ASP.NET Core 2.1 and for many was a good compromise, solving connection re-use along with lifetime management.

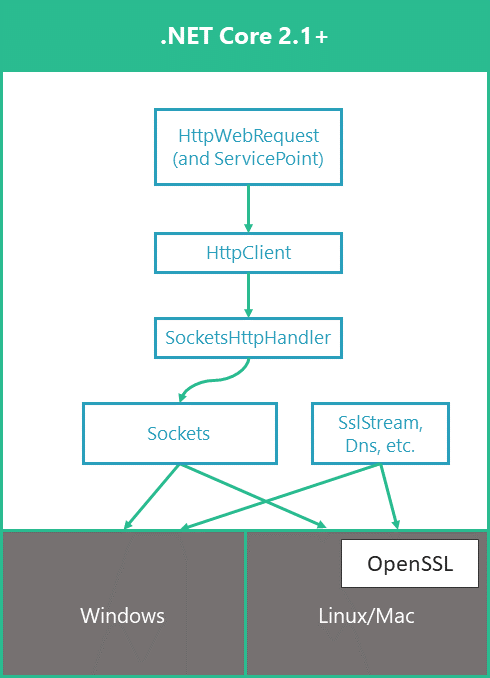

In the same timeframe, the .NET team were working on a solution of their own. Also released in .NET Core 2.1, the team introduced a new SocketsHttpHandler at the heart of the handler chain for HttpClient. This handler was built directly on top of the Socket APIs, implementing HTTP in managed code. Part of this work included a connection pooling system and the ability to set maximum lifetimes for those connections. This feature will be the focus of the remainder of this post.

But before we get to that, I want to point out that while SocketsHttpHandler was enabled by default from .NET Core 2.1, the implementation was limited to HTTP/1.1 communication. Those requiring HTTP/2 had to disable the feature and use the older handler chain which relied as before on unmanaged code and did not include connection pooling.

Fortunately, this limitation has been removed in .NET Core 3.0, and HTTP/2 support is now available. This should make the use of HttpClient based on the SocketsHttpHandler chain suitable for all.

What is Connection Pooling?

The SocketsHttpHandler establishes a pool of connections for each unique endpoint which your application makes an outbound HTTP request to via HttpClient. On the first request to an endpoint, when no existing connections exist, a new HTTP connection will be established and used for the request. Once that request completes, the connection is left open and is returned into the pool.

Subsequent requests to the same endpoint will attempt to locate an available connection from the pool. If there are no free connections and the connection limit for that endpoint has not been reached, a new connection will be established. Once the connection limit is reached, requests are held in a queue, until a connection is free to send them.

I’ve been diving into the internal code for this implementation and may produce a more in-depth analysis of the pooling behaviour in a future blog post.

How to Control Connection Pooling

There are three main settings which can be used to control connection pooling behaviour.

PooledConnectionLifetime, defines how long connections remain active when pooled. Once this lifetime expires, the connection will no longer be pooled or issued for future requests.

PooledConnectionIdleTimeout, defines how long idle connections remain within the pool while unused. Once this lifetime expires, the idle connection will be cleaned up and removed from the pool.

MaxConnectionsPerServer, defines the maximum number of outbound connections which will be established per endpoint. Connections for each endpoint are pooled separately. For example, if the value of the max connections is 2, and your application sends requests to both www.github.com and www.google.com, there may be up to 4 open connections in total.

By default, from .NET Core 2.1 onwards, the SocketsHttpHandler is used as an inner handler by the higher level HttpClientHandler. Without any custom configuration, the default settings for connection pooling apply.

The PooledConnectionLifetime default is infinite, so whilst regularly used for requests, connections may remain open indefinitely. The PooledConnectionIdleTimeout defaults to 2 minutes, with connections being cleaned up if they sit unused in the pool for longer than that period of time. MaxConnectionsPerServer defaults to int.MaxValue and therefore the number of connections is essentially unrestricted.
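If you want to confirm these defaults on the runtime you are targeting, a minimal sketch such as the following prints them from a freshly constructed handler. The class name is purely illustrative, and the idle timeout default in particular may differ between runtime versions, so treat the printed values as the source of truth for your environment.

```csharp
using System;
using System.Net.Http;

public static class DefaultPoolSettings
{
    public static void Main()
    {
        // A handler with no custom configuration exposes the default pooling values.
        using (var handler = new SocketsHttpHandler())
        {
            Console.WriteLine($"PooledConnectionLifetime:    {handler.PooledConnectionLifetime}");
            Console.WriteLine($"PooledConnectionIdleTimeout: {handler.PooledConnectionIdleTimeout}");
            Console.WriteLine($"MaxConnectionsPerServer:     {handler.MaxConnectionsPerServer}");
        }
    }
}
```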

If you wish to control any of these values, an instance of SocketsHttpHandler can be created manually and configured as necessary.
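A minimal sketch of such a configuration, using the values discussed in this section, might look like this. The class and method names are illustrative only; the three properties are the settings described above.

```csharp
using System;
using System.Net.Http;

public static class PooledHandlerExample
{
    // Builds a handler with explicit connection pooling settings.
    public static SocketsHttpHandler CreateHandler()
    {
        return new SocketsHttpHandler
        {
            // Stop re-issuing a connection once it is 10 minutes old.
            PooledConnectionLifetime = TimeSpan.FromMinutes(10),
            // Remove a connection that has sat unused in the pool for 5 minutes.
            PooledConnectionIdleTimeout = TimeSpan.FromMinutes(5),
            // Allow at most 10 concurrent connections per endpoint.
            MaxConnectionsPerServer = 10
        };
    }

    public static void Main()
    {
        using (var handler = CreateHandler())
        {
            Console.WriteLine($"Lifetime: {handler.PooledConnectionLifetime}");
        }
    }
}
```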

In the preceding example, the SocketsHttpHandler is configured such that connections will cease to be re-issued and be closed after a maximum of 10 minutes. If idle for 5 minutes, the connections will be removed earlier by the clean up process for the pool. We have also limited the maximum number of connections (per endpoint) to ten. Should we need to make more outbound requests in parallel, some requests may be queued until connections, from the pool of 10, become available for use.

To apply the handler, it is passed into the constructor for the HttpClient.
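As a rough sketch, the wiring could look like the following. The class name and the choice to hold the client in a static field are assumptions made for illustration; the point is simply that one long-lived HttpClient wraps the configured handler.

```csharp
using System;
using System.Net.Http;

public static class SharedClientExample
{
    // One long-lived client for the application; its handler owns the connection pool.
    public static readonly HttpClient Client = new HttpClient(new SocketsHttpHandler
    {
        PooledConnectionLifetime = TimeSpan.FromMinutes(10),
        PooledConnectionIdleTimeout = TimeSpan.FromMinutes(5),
        MaxConnectionsPerServer = 10
    });

    public static void Main()
    {
        // Every request sent through Client draws from the same pool.
        Console.WriteLine($"Client ready, request timeout: {Client.Timeout}");
    }
}
```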

Testing Connection Lifetime

Take for example this sample program:
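The listing below is a sketch of a program matching the description that follows, not necessarily the exact original. The URL, the host name passed to DNS, and the final Console.ReadLine (which just keeps the process alive while you run netstat) are assumptions.

```csharp
using System;
using System.Linq;
using System.Net;
using System.Net.Http;
using System.Net.Sockets;
using System.Threading.Tasks;

public static class ConnectionLifetimeTest
{
    public static SocketsHttpHandler CreateHandler()
    {
        return new SocketsHttpHandler
        {
            PooledConnectionLifetime = TimeSpan.FromMinutes(10),
            PooledConnectionIdleTimeout = TimeSpan.FromMinutes(5),
            MaxConnectionsPerServer = 10
        };
    }

    public static async Task Main()
    {
        // Print an IPv4 address for the host so it can be matched in the netstat output.
        var addresses = await Dns.GetHostAddressesAsync("www.google.com");
        var ipv4 = addresses.FirstOrDefault(a => a.AddressFamily == AddressFamily.InterNetwork);
        Console.WriteLine(ipv4);

        var client = new HttpClient(CreateHandler());

        // Five sequential requests with a two second pause between them.
        for (var i = 0; i < 5; i++)
        {
            using (var response = await client.GetAsync("https://www.google.com"))
            {
                Console.WriteLine($"Request {i + 1}: {(int)response.StatusCode}");
            }

            await Task.Delay(TimeSpan.FromSeconds(2));
        }

        Console.WriteLine("Press Enter to exit.");
        Console.ReadLine();
    }
}
```

To reproduce the second run described later, change PooledConnectionLifetime to TimeSpan.FromSeconds(1).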

Using the settings we have just discussed, this code makes 5 requests in sequence to the same endpoint. Between each request, it pauses for two seconds. The code also outputs an IPv4 address retrieved from DNS for the Google server. We can use this IP address to review the connections which are open to it by using the netstat command, issued in PowerShell:

netstat -ano | findstr 216.58.211

In my case, the output from this command is:

TCP 192.168.1.139:53040 216.58.211.164:443 ESTABLISHED 20372

We can see that, in this case, only one connection is opened to the remote endpoint. After each request, that connection is returned to the pool and is therefore available for re-use when the next request is issued.

If we change the lifetime for connections so that they expire after 1 second, we can test how this affects the behaviour:

TCP 192.168.1.139:53115 216.58.211.164:443 TIME_WAIT 0

TCP 192.168.1.139:53116 216.58.211.164:443 TIME_WAIT 0

TCP 192.168.1.139:53118 216.58.211.164:443 TIME_WAIT 0

TCP 192.168.1.139:53120 216.58.211.164:443 TIME_WAIT 0

TCP 192.168.1.139:53121 216.58.211.164:443 ESTABLISHED 25948

In this case, we can see that five connections were used. The first four of these were removed from the pool after 1 second, so were not available for re-use on the next request. As a result, each request has opened a new connection. The original connections are now in a TIME_WAIT state and unavailable for re-use by the OS for new outbound connections. The final connection is shown as ESTABLISHED because I caught it before it had expired.

Testing Max Connections

For the next test case, we’ll use the following program:
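Again, this is a sketch reconstructed from the description that follows rather than the exact original listing. The URL and the class and method names are assumptions.

```csharp
using System;
using System.Diagnostics;
using System.Linq;
using System.Net.Http;
using System.Threading.Tasks;

public static class MaxConnectionsTest
{
    public static SocketsHttpHandler CreateHandler(int maxConnections)
    {
        return new SocketsHttpHandler
        {
            PooledConnectionLifetime = TimeSpan.FromMinutes(10),
            PooledConnectionIdleTimeout = TimeSpan.FromMinutes(5),
            MaxConnectionsPerServer = maxConnections
        };
    }

    public static async Task Main()
    {
        var client = new HttpClient(CreateHandler(2));

        var stopwatch = Stopwatch.StartNew();

        // Start 200 requests without awaiting each one, so they run concurrently.
        var tasks = Enumerable.Range(0, 200)
            .Select(_ => client.GetAsync("https://www.google.com"))
            .ToList();

        var responses = await Task.WhenAll(tasks);

        stopwatch.Stop();
        Console.WriteLine($"{stopwatch.ElapsedMilliseconds}ms taken for {responses.Length} requests");

        foreach (var response in responses)
        {
            response.Dispose();
        }
    }
}
```

Timings will vary with your network and the remote server, so the figures shown here should be read as relative rather than absolute.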

The code limits the MaxConnectionsPerServer to 2. It then starts 200 Tasks, each of which issues an HTTP request to the same endpoint. These Tasks will run concurrently. The time taken for all requests to complete is written to the console.

After running this on my machine the output is:

8013ms taken for 200 requests

If we view the connections using netstat, we can see two established connections, as per the limit we defined.

TCP 192.168.1.139:52780 216.58.204.36:443 ESTABLISHED 16076

TCP 192.168.1.139:52780 216.58.204.36:443 ESTABLISHED 16076

If we adjust this code to allow MaxConnectionsPerServer = 10, we can re-run the application. This time, the run completes in roughly a quarter of the previous time.

2123ms taken for 200 requests

When we view the connections, we can see that indeed, ten connections were established.

TCP 192.168.1.139:52798 216.58.204.36:443 ESTABLISHED 30856

TCP 192.168.1.139:52799 216.58.204.36:443 ESTABLISHED 30856

TCP 192.168.1.139:52800 216.58.204.36:443 ESTABLISHED 30856

TCP 192.168.1.139:52801 216.58.204.36:443 ESTABLISHED 30856

TCP 192.168.1.139:52802 216.58.204.36:443 ESTABLISHED 30856

TCP 192.168.1.139:52803 216.58.204.36:443 ESTABLISHED 30856

TCP 192.168.1.139:52804 216.58.204.36:443 ESTABLISHED 30856

TCP 192.168.1.139:52805 216.58.204.36:443 ESTABLISHED 30856

TCP 192.168.1.139:52806 216.58.204.36:443 ESTABLISHED 30856

TCP 192.168.1.139:52807 216.58.204.36:443 ESTABLISHED 30856

As a result the throughput is improved. We allowed more outbound connections and so the queue of requests can be processed more quickly, with more requests being issued in parallel over the extra connections.

Do I Still Need IHttpClientFactory?

This is a very logical question which may arise as the result of this post. One of the features of IHttpClientFactory is the lifetime management of HttpMessageHandler chains and as a result, also of the underlying connections. Armed with the knowledge that HttpClient and SocketsHttpHandler can achieve the same effect, do we need to bother with IHttpClientFactory?

My view is that IHttpClientFactory has other benefits beyond helping manage connection lifetimes and still adds value when making outbound HTTP requests. It provides a great pattern to define logical configurations for HttpClient instances using the named or typed client approaches. The latter, typed clients, is a personal favourite of mine.
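To illustrate the typed client pattern, here is a small sketch. It assumes the Microsoft.Extensions.Http package is referenced; ExampleApiClient, its method and the example.com base address are hypothetical names invented for this example. It also shows that the pooling settings from earlier can still be applied to the primary handler when using the factory.

```csharp
using System;
using System.Net.Http;
using System.Threading.Tasks;
using Microsoft.Extensions.DependencyInjection;

// Hypothetical typed client: wraps an HttpClient supplied by the factory.
public class ExampleApiClient
{
    private readonly HttpClient _client;

    public ExampleApiClient(HttpClient client)
    {
        _client = client;
    }

    public Uri BaseAddress => _client.BaseAddress;

    public Task<string> GetHomePageAsync() => _client.GetStringAsync("/");
}

public static class TypedClientExample
{
    public static ServiceProvider BuildProvider()
    {
        var services = new ServiceCollection();

        services
            .AddHttpClient<ExampleApiClient>(c => c.BaseAddress = new Uri("https://example.com"))
            // Optional: supply a SocketsHttpHandler with explicit pooling settings.
            .ConfigurePrimaryHttpMessageHandler(() => new SocketsHttpHandler
            {
                PooledConnectionLifetime = TimeSpan.FromMinutes(10)
            });

        return services.BuildServiceProvider();
    }

    public static void Main()
    {
        using (var provider = BuildProvider())
        {
            var client = provider.GetRequiredService<ExampleApiClient>();
            Console.WriteLine($"Typed client configured for {client.BaseAddress}");
        }
    }
}
```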

The fluent configuration approach to these logical clients also makes the use of custom DelegatingHandlers with clients very straightforward and clear. This includes the extension of this approach by the ASP.NET team to integrate with Polly in order to easily apply resiliency and transient fault handling for outbound requests.

Even without the lifetime management piece, I expect to use the factory in my applications for some time to come. From discussions I’ve seen online, it’s quite possible that in future releases, the lifetime management functionality will be deprecated and/or removed from IHttpClientFactory, since the problem it was solving is no longer applicable.

Summary

In this post, we saw that since .NET Core 2.1, connection pools are maintained when using the default SocketsHttpHandler implementation. Using the settings for the pools, we can control the lifetime of connections and limit the number of outbound connections which may be created per endpoint.

We also discussed that IHttpClientFactory has advantages and features beyond just connection lifetime management, and is therefore still a valuable tool.

Credits

The diagrams in this post are redrawn, modified slightly and updated based on some originally used by Karel Zikmund, software engineering manager on the .NET team at Microsoft.
